[Image from the University of Illinois]

Coordinated Science Lab (CSL) researchers at the University of Illinois have worked out mathematically how the brain times the impulses it sends out in response to a sensory stimulus.

“There was a major question in neuron function that had not been answered,” Rama Ratnam, a senior research scientist at Illinois, said in a press release. “What is the neural code? That is, how is a sensory signal from the external world converted or coded in the form of a neural signal? Oddly enough, we do not really know.”

The neural code wasn’t designed by humans, which has made it hard for engineers to understand. Engineered codes hold no such mysteries because engineers developed them themselves.

“The neural code evolved over evolutionary time scales, possibly 550–650 million years ago when box and comb jellies first came into being,” said Ratnam. “What makes the neural code hard to understand is that the encoding is not in a form that is analog or digital. We wonder, how do the neurons in the brain translate and interpret the sensory signal? Mechanically, when we hear sound, how do we know who’s saying it, what they’re saying and how they’re saying it in real-time?”

The researchers were tasked with figuring out how sensory neurons encode and decode signals. In artificial systems, the encoder and decoder must use the same format. For example, a computer can’t encode an image as a JPEG and then decode it as an MP3 file.

“You can’t swap one format for the other in artificial systems. They have to be closely coupled,” Erik Johnson, former CSL and ECE graduate student, said. “Neuron encoding and decoding has to be closely coupled as well.”

The researchers suggest that a neuron encodes its input as impulses and decodes those impulses at the same time, so that the quality of the coding can be checked against the input.

“This is a radical departure from the recognized view of neural coding where encoding and decoding take place in separate neurons,” Ratnam said.

According to the researchers, neurons use the most effective strategy for encoding and transmitting analog signals, with the rate of impulse generation controlling the fidelity of the coding. Fidelity is measured by an internal decoding process called a threshold mechanism.

The neurons’ output is regulated, which helps control both the average impulse rate and the neurons’ energy consumption. As a result, encoding and decoding are as energy-efficient as possible. A neuron encodes a signal with the highest fidelity achievable for any given rate of impulse generation, in a way that resembles source coding and data compression.
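The trade-off between impulse rate and fidelity can be illustrated with a toy model. The Python sketch below is not the researchers' published model. It assumes a leaky internal estimate (the hypothetical `decay` parameter), a fixed `threshold`, and a made-up slowly varying test signal. The encoder keeps its own running reconstruction of the input and fires an impulse whenever the input exceeds that reconstruction by the threshold. A separate decoder applying the same rule to the impulse times recovers exactly the same reconstruction, which is the sense in which the two are closely coupled.

```python
import math

def encode(signal, threshold, decay=0.98):
    """Toy encoder: return (spike_times, internal_reconstruction)."""
    estimate = 0.0                      # the encoder's own decoded estimate
    spikes, reconstruction = [], []
    for t, sample in enumerate(signal):
        estimate *= decay               # the estimate leaks between impulses
        if sample - estimate >= threshold:
            spikes.append(t)            # error reached the threshold: fire
            estimate += threshold       # each impulse lifts the estimate
        reconstruction.append(estimate)
    return spikes, reconstruction

def decode(spike_times, n_samples, threshold, decay=0.98):
    """Rebuild the signal from impulse times alone, using the encoder's rule."""
    spike_set = set(spike_times)
    estimate, out = 0.0, []
    for t in range(n_samples):
        estimate *= decay
        if t in spike_set:
            estimate += threshold
        out.append(estimate)
    return out

def rms_error(a, b):
    """Root-mean-square difference between two equal-length sequences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))

# Hypothetical test input: a slow, non-negative oscillation starting at zero.
signal = [0.75 * (1 - math.cos(2 * math.pi * t / 500)) for t in range(1000)]

results = []  # (threshold, number of impulses, reconstruction error)
for threshold in (0.4, 0.2, 0.1):
    spikes, internal = encode(signal, threshold)
    external = decode(spikes, len(signal), threshold)
    results.append((threshold, len(spikes), rms_error(signal, external)))
    print(f"threshold={threshold}  impulses={len(spikes)}  "
          f"rms error={results[-1][2]:.3f}")
```

In this sketch, lowering the threshold produces more impulses and a smaller reconstruction error, so the impulse rate acts as the knob that sets fidelity. The energy cost of each impulse is not modeled here.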

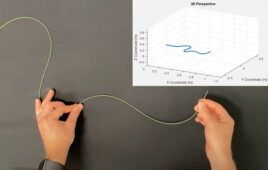

Using this information, the researchers derived an optimal mathematical code that times the impulses neurons output.

“There is a mathematically optimum strategy for coding and transmitting signals in the most ideal way, and neurons have evolved biophysical mechanisms to find that way,” Ratnam said. “Neurons are not noisy or unreliable coders as is believed. They are precise devices and they encode information with the fidelity needed for a given physiological function.”

Without this kind of coding and data compression in the body, the brain would need far more neurons, grow too large and use too much metabolic energy, according to the researchers.

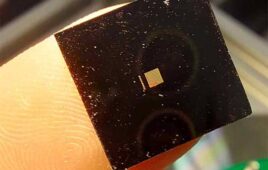

“With this knowledge, we can work toward creating prosthetics to replace damaged sensory organs—could be eyes, ears, anything,” Ratnam said. “If we can generate the optimum way of representing the signals to stimulate the neurons in the brain, it could make the brain think it hasn’t lost anything.”

The researchers plan to use this understanding of neuron encoding and decoding to create prosthetics that send sensory signals to the brain in the same manner that healthy organs do, with the hope of restoring function that has been lost.

The research was published in the Journal of Computational Neuroscience and Frontiers in Computational Neuroscience.
