Augmented reality (AR) is causing quite a stir. Whether it is Microsoft HoloLens, Google Glass, or even Pokémon Go, the concept of digitally enhancing and enriching the world around us has captured the imagination of millions. While augmented reality products have for the most part occupied the consumer gaming space, product design and development firm Cambridge Consultants is exploring how AR can transform the operating room. Developing AR technology capable of being used in a medical setting, however, brings a fundamentally different set of requirements. The guiding question is always “How do we deliver clinical benefit?”

Minimally invasive surgery (MIS) – also known as keyhole or laparoscopic surgery – is often complex, yet it is performed through tiny incisions instead of one large opening. The clinical benefit of MIS has been demonstrated repeatedly. However, the information required by the surgeon and team during these procedures has traditionally proven difficult to access in an intuitive way.

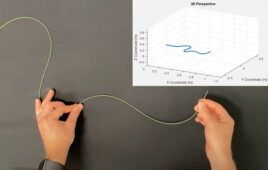

An example given to me recently involved the difficulty of placing laparoscopic tools during a procedure to remove kidney stones. In this scenario, a specialist radiographer would interpret the two-dimensional CT scan data to direct the tool into the patient’s kidney, and then hand over to the surgeon to remove the kidney stones. All too often, though, the tool might not end up in the optimal position for the surgeon, or at the best angle. Outcomes would improve if the surgeon could see the location of the kidney and the tool in real time, in the three-dimensional space of the patient, removing the need to mentally map the scan data onto the real world.

A next-generation AR system promises to provide a real-time, interactive 3D view of the inside of the patient, accurately guiding the surgeon in ways not previously possible.

Cambridge Consultants explored how close we might be to seeing that promise realized with current commercially available augmented reality equipment. We obtained a Development Edition of Microsoft’s HoloLens, and set about discovering its benefits and limitations for use in a surgical environment. The HoloLens is a self-contained Windows 10 PC worn on the user’s head, much like a large pair of wrap‑around glasses. Being self-contained means it does not have any cables restricting the user’s movement. It has sensors capable of detecting the user’s environment (primarily walls, floor and stationary objects like tables), interpreting gestures from the user’s hands when held out in front, and tracking where the user’s head is located and in which direction it is pointed. All of this combines to make it possible to display a virtual object so that it stays in a fixed location in 3D space while the user moves around.
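The idea of a virtual object staying put while the wearer moves can be illustrated with a small coordinate transform. The sketch below is not HoloLens code and does not use any HoloLens API. It assumes a simplified head pose (a position plus a rotation about the vertical axis only), and the function name `world_to_head` and all numbers are hypothetical. The hologram keeps one fixed world coordinate, and only its coordinates relative to the head are recomputed as the head pose changes.

```python
import math


def world_to_head(point_world, head_position, head_yaw_rad):
    """Express a world-fixed point in the headset's own coordinate frame.

    Simplifying assumption: the head only rotates about the vertical (y)
    axis. A real headset tracks full 3D orientation.
    """
    # Shift the origin to the head position.
    dx = point_world[0] - head_position[0]
    dy = point_world[1] - head_position[1]
    dz = point_world[2] - head_position[2]
    # Undo the head's yaw: apply the inverse of a rotation about y.
    c, s = math.cos(head_yaw_rad), math.sin(head_yaw_rad)
    x_head = c * dx - s * dz
    z_head = s * dx + c * dz
    return (x_head, dy, z_head)


# Hypothetical hologram anchored 2 m in front of the starting position.
hologram_world = (0.0, 1.0, 2.0)

# Two hypothetical head poses: (position in metres, yaw in degrees).
poses = [((0.0, 1.7, 0.0), 0.0), ((1.0, 1.7, 0.5), 30.0)]

for position, yaw_deg in poses:
    relative = world_to_head(hologram_world, position, math.radians(yaw_deg))
    # The world coordinate never changes; only the head-relative one does.
    print(position, yaw_deg, relative)
```

The renderer draws the object at the head-relative coordinate each frame, which is why any lag or error in head tracking shows up as the object appearing to drift.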


(Credit: Cambridge Consultants)

To assess the suitability of AR technology in the operating room, we created a software application envisioning the future of surgery. We started with an accurate anatomical surface model of the internal components of a torso from the BodyParts3D project, separating out the different overlapping systems – skeletal, muscular, respiratory and digestive – and applied bright colours to easily distinguish them. Once the model is positioned within a real-world mannequin – simulating our patient on an operating table – the user is able to move around and view the patient from any angle, and can use voice commands to control what is shown at any point in time.

In addition to the anatomical information being displayed in situ within the patient, the surgeon has a number of virtual monitors, each showing different key information. The patient’s vital signs are constantly shown on one, medical notes on another, and traditional 2D scan data on a third. The surgeon can move these around using hand gestures and voice commands, allowing the surgeon’s hands to remain sterile and the monitors to be placed in locations impossible for a physical monitor. The vital signs monitor has audible beeps and alarms which appear to come from where the monitor is placed, just as they would from a physical monitor.

This all adds up to a rather convincing demonstration – the way the virtual objects remain rock solid while the user moves around is impressive, and the spatial sound is superb – but is it something that is ready to be used when a patient’s life depends on it? The short answer is not yet.

The most obvious, and most widely discussed, shortcoming is the narrow field of view. It is sufficient to show the whole torso from two metres away, but when the surgeon steps in to arm’s reach the image is cropped at the edges. The surgeon is then forced to move their head in an unnatural way to keep the important information in the centre of view, which causes neck strain and reduces the amount of information that can be seen at once.
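The cropping effect follows from simple geometry: the width of the world that a display can cover at a distance d is 2·d·tan(FOV/2). The sketch below works this through for the two distances mentioned above. The field-of-view angle and torso size are illustrative assumptions, not measured HoloLens or anatomical figures, and the function names are hypothetical.

```python
import math


def visible_extent(distance_m, fov_deg):
    """Width of the region covered by the display at a given distance."""
    return 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)


def fits_in_view(object_size_m, distance_m, fov_deg):
    """True if an object of this size fits inside the display region."""
    return object_size_m <= visible_extent(distance_m, fov_deg)


ASSUMED_FOV_DEG = 30.0   # assumption for illustration only
ASSUMED_TORSO_M = 0.6    # assumption for illustration only

# Two metres away versus roughly arm's reach.
for distance in (2.0, 0.6):
    extent = visible_extent(distance, ASSUMED_FOV_DEG)
    fits = fits_in_view(ASSUMED_TORSO_M, distance, ASSUMED_FOV_DEG)
    print(f"{distance:.1f} m: covers {extent:.2f} m, torso fits: {fits}")
```

Under these assumed numbers the torso fits comfortably at two metres but not at arm's reach, which matches the behaviour described. Because the covered width scales linearly with distance, only a wider display angle can fix the problem at close range.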

Another disappointment was the lack of access to information about the user’s hands within the sensor’s field of view, which is thankfully larger than that of the projected image. Although the HoloLens uses technology originating in the Kinect, it does not expose the same level of detail about the position and state of the user’s hands. Instead, this information is simplified into two or three gestures analogous to a mouse click or pressing the escape key on a keyboard. This means tracking a surgeon’s hands, or the tools used during a procedure, would require additional hardware and sensors.

There are a few other areas which also require improvement to meet the demands of an operating room: the amount of detail which can be rendered quickly needs to increase so that the image stays exactly in position while the user’s head moves, the headset needs to get lighter to reduce neck strain when looking down at the operating table, and the physical design needs to be capable of being sterilised.

By far the biggest challenge in using augmented reality to achieve the dream of intraoperative overlay lies in solving hard problems that have nothing to do with visualization. Whilst AR technology can anchor content to a given location, how do we know the true location of patient anatomy? Optical techniques to find the human form work well, but what of the soft tissue inside? Non‑optical, real-time imaging methods like those being developed at Cambridge Consultants may well hold the answer, but there is still significant work to be done before we can register pre-operative data to a patient, or register the real-time information, with sufficient accuracy.
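To make "registration" concrete, here is a deliberately simplified sketch: a least-squares rigid alignment (rotation plus translation) of matched landmark points in 2D, followed by the residual error. This is a generic textbook technique, not Cambridge Consultants' method, and it assumes the anatomy does not deform, which is exactly the assumption that soft tissue breaks. All function names and point values are hypothetical.

```python
import math


def register_rigid_2d(source, target):
    """Least-squares rotation angle and offset mapping source onto target.

    Assumes the points are matched pairs and the motion is rigid.
    """
    n = len(source)
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(p[0] for p in target) / n
    ty = sum(p[1] for p in target) / n
    cross = 0.0
    dot = 0.0
    for (ax, ay), (bx, by) in zip(source, target):
        # Work with coordinates relative to each centroid.
        ax, ay, bx, by = ax - sx, ay - sy, bx - tx, by - ty
        cross += ax * by - ay * bx
        dot += ax * bx + ay * by
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Offset that carries the rotated source centroid onto the target centroid.
    offset = (tx - (c * sx - s * sy), ty - (s * sx + c * sy))
    return theta, offset


def apply_rigid_2d(point, theta, offset):
    """Rotate a point by theta, then translate it by offset."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * point[0] - s * point[1] + offset[0],
            s * point[0] + c * point[1] + offset[1])


def rms_error(source, target, theta, offset):
    """Root-mean-square distance between mapped source and target points."""
    total = 0.0
    for p, q in zip(source, target):
        x, y = apply_rigid_2d(p, theta, offset)
        total += (x - q[0]) ** 2 + (y - q[1]) ** 2
    return math.sqrt(total / len(source))


# Hypothetical landmarks in pre-operative scan coordinates (metres).
scan_points = [(0.0, 0.0), (0.1, 0.0), (0.1, 0.2), (0.0, 0.2)]
# The same landmarks as seen in the room, simulated here by a known motion.
room_points = [apply_rigid_2d(p, 0.3, (0.5, -0.2)) for p in scan_points]

theta, offset = register_rigid_2d(scan_points, room_points)
print(theta, offset, rms_error(scan_points, room_points, theta, offset))
```

With real data the residual error would not vanish, and its size relative to the clinical tolerance is what "sufficient accuracy" comes down to.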

All in all, the challenges that prevent AR from being viable in the OR remain. They are by no means insurmountable, but there is much work yet to be done.