Researchers at MIT and Boston Children’s Hospital have developed a system that can take MRI scans of a patient’s heart and, in a matter of hours, convert them into a tangible, physical model that surgeons can use to plan surgery.

The models could provide a more intuitive way for surgeons to assess and prepare for the anatomical idiosyncrasies of individual patients. “Our collaborators are convinced that this will make a difference,” says Polina Golland, a professor of electrical engineering and computer science at MIT, who led the project. “The phrase I heard is that ‘surgeons see with their hands,’ that the perception is in the touch.”

This fall, seven cardiac surgeons at Boston Children’s Hospital will participate in a study intended to evaluate the models’ usefulness.

Golland and her colleagues will describe their new system at the International Conference on Medical Image Computing and Computer Assisted Intervention in October. Danielle Pace, an MIT graduate student in electrical engineering and computer science, is first author on the paper and spearheaded the development of the software that analyzes the MRI scans. Mehdi Moghari, a physicist at Boston Children’s Hospital, developed new procedures that increase the precision of MRI scans tenfold, and Andrew Powell, a cardiologist at the hospital, leads the project’s clinical work.

The work was funded by both Boston Children’s Hospital and Harvard Catalyst, a consortium aimed at rapidly moving scientific innovation into the clinic.

MRI data consist of a series of cross sections of a three-dimensional object. Like a black-and-white photograph, each cross section has regions of dark and light, and the boundaries between those regions may indicate the edges of anatomical structures. Then again, they may not.

Determining the boundaries between distinct objects in an image is one of the central problems in computer vision, known as “image segmentation.” But general-purpose image-segmentation algorithms aren’t reliable enough to produce the very precise models that surgical planning requires.
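To make the difficulty concrete, here is a minimal sketch of the simplest general-purpose approach, global intensity thresholding, applied to an invented 5-by-6 slice. The slice values, the threshold, and the function names `threshold_segment` and `boundary_pixels` are illustrative assumptions, not part of the MIT system.

```python
# A toy 2-D "cross section": intensities from 0 (dark) to 9 (bright).
# The values are invented for illustration, not real MRI data.
SLICE = [
    [1, 1, 2, 2, 1, 1],
    [1, 7, 8, 8, 2, 1],
    [1, 8, 9, 8, 2, 1],
    [1, 7, 8, 3, 5, 1],
    [1, 1, 2, 2, 5, 1],
]


def threshold_segment(image, level):
    """Label every pixel brighter than `level` as foreground (1), else 0."""
    return [[1 if v > level else 0 for v in row] for row in image]


def boundary_pixels(mask):
    """Return foreground pixels that touch background or the image edge
    (4-connectivity): a crude stand-in for "edges" in the image."""
    rows, cols = len(mask), len(mask[0])
    edges = set()
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c]:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if not (0 <= nr < rows and 0 <= nc < cols) or not mask[nr][nc]:
                    edges.add((r, c))
                    break
    return edges


mask = threshold_segment(SLICE, 4)
```

With a threshold of 4, the dim streak in column 4 is labeled foreground, and the single darker pixel at row 3, column 3 carves a notch out of the bright region. Nothing in the intensities alone says whether either feature is real anatomy.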

Human Factors

Typically, the way to make an image-segmentation algorithm more precise is to augment it with a generic model of the object to be segmented. Human hearts, for instance, have chambers and blood vessels that are usually in roughly the same places relative to each other. That anatomical consistency could give a segmentation algorithm a way to weed out improbable conclusions about object boundaries.

The problem with that approach is that many of the cardiac patients at Boston Children’s Hospital require surgery precisely because the anatomy of their hearts is irregular. Inferences from a generic model could obscure the very features that matter most to the surgeon.
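The trade-off shows up in a minimal sketch of one common way a generic shape model is folded in: as a per-pixel prior probability combined with the image evidence by Bayes’ rule. The two-label setup, the numbers, and the function name are assumptions for illustration; the article does not describe any particular prior-based algorithm.

```python
def posterior_foreground(lik_fg: float, lik_bg: float, prior_fg: float) -> float:
    """P(foreground | intensity) for one pixel by Bayes' rule.

    lik_fg, lik_bg: how likely the observed intensity is under each label.
    prior_fg: the generic model's probability that this location is foreground.
    """
    joint_fg = lik_fg * prior_fg
    joint_bg = lik_bg * (1.0 - prior_fg)
    return joint_fg / (joint_fg + joint_bg)


# Hypothetical pixel: the intensity favors "chamber" four to one.
LIK_FG, LIK_BG = 0.8, 0.2

flat = posterior_foreground(LIK_FG, LIK_BG, prior_fg=0.5)      # no anatomical prior
typical = posterior_foreground(LIK_FG, LIK_BG, prior_fg=0.05)  # generic heart: "rarely here"
```

With a flat prior the pixel is labeled chamber (posterior 0.8). With the generic prior the posterior drops to about 0.17 and the pixel is relabeled background, which is the behavior that helps on typical hearts and hurts on irregular ones.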

In the past, researchers have produced printable models of the heart by manually indicating boundaries in MRI scans. But with the 200 or so cross sections in one of Moghari’s high-precision scans, that process can take eight to 10 hours.
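Assuming exactly 200 cross sections, that manual effort works out to roughly two and a half to three minutes per slice:

$$
\frac{8 \times 60\ \text{min}}{200\ \text{slices}} = 2.4\ \text{min/slice},
\qquad
\frac{10 \times 60\ \text{min}}{200\ \text{slices}} = 3\ \text{min/slice}.
$$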

“They want to bring the kids in for scanning and spend probably a day or two doing planning of how exactly they’re going to operate,” Golland says. “If it takes another day just to process the images, it becomes unwieldy.”

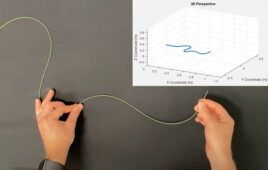

Pace and Golland’s solution was to ask a human expert to identify boundaries in a few of the cross sections and allow algorithms to take over from there. Their strongest results came when they asked the expert to segment only a small patch (one-ninth of the total area) of each cross section.

In that case, segmenting just 14 patches and letting the algorithm infer the rest yielded 90 percent agreement with expert segmentation of the entire collection of 200 cross sections. Human segmentation of just three patches yielded 80 percent agreement.
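The article does not say how “agreement” was scored. One common overlap measure in segmentation work is the Dice coefficient, twice the shared area divided by the sum of the two areas; the minimal sketch below assumes that metric purely for illustration, with hypothetical masks stored as sets of (row, column) pixels. It also works out the scale of the expert’s input: 14 patches, each one-ninth of a cross section, cover 14/1800 of the combined area of all 200 cross sections, under 1 percent.

```python
from typing import Set, Tuple

Pixel = Tuple[int, int]


def dice(mask_a: Set[Pixel], mask_b: Set[Pixel]) -> float:
    """Dice overlap: 2|A ∩ B| / (|A| + |B|); 1.0 means identical masks."""
    if not mask_a and not mask_b:
        return 1.0  # two empty masks agree trivially
    return 2.0 * len(mask_a & mask_b) / (len(mask_a) + len(mask_b))


# Hypothetical masks: a 10x10 square, and the same square shifted one column.
expert = {(r, c) for r in range(10) for c in range(10)}
algorithm = {(r, c) for r in range(10) for c in range(1, 11)}
print(dice(expert, algorithm))  # prints 0.9

# Fraction of all slice area the expert labels: 14 patches of one-ninth each.
labeled_fraction = 14 / (9 * 200)
print(f"{labeled_fraction:.2%}")  # prints 0.78%
```

The toy masks are a reminder of how strict such a score can be: shifting a 10-pixel-wide shape by a single column already costs 10 points of overlap.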

“I think that if somebody told me that I could segment the whole heart from eight slices out of 200, I would not have believed them,” Golland says. “It was a surprise to us.”

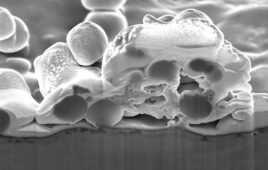

Together, human segmentation of sample patches and algorithmic generation of a digital 3-D heart model take about an hour. The 3-D-printing process takes a couple of hours more.

Prognosis

Currently, the algorithm examines patches of unsegmented cross sections and looks for similar features in the nearest segmented cross sections. But Golland believes that its performance might be improved if it also examined patches that ran obliquely across several cross sections. This and other variations on the algorithm are the subject of ongoing research.
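A minimal sketch of the general idea described above, often called patch-based label propagation: for each pixel of an unsegmented slice, compare its surrounding patch with patches in the nearest segmented slice and copy the label of the closest match. This is a generic technique written for illustration, not the authors’ algorithm; the sum-of-squared-differences cost, the patch radius, the search window, the use of a single nearest slice, and all function names are assumptions. Pixels the expert did not label are stored as `None` and are never used as matches, mimicking a slice where only one patch was segmented.

```python
from typing import Dict, List, Optional, Sequence

Image = List[List[float]]
Labels = List[List[Optional[int]]]  # None marks pixels the expert did not label


def patch(image: Image, r: int, c: int, radius: int) -> List[float]:
    """Flatten the square neighborhood around (r, c), clamping at the edges."""
    rows, cols = len(image), len(image[0])
    values = []
    for dr in range(-radius, radius + 1):
        for dc in range(-radius, radius + 1):
            rr = min(max(r + dr, 0), rows - 1)
            cc = min(max(c + dc, 0), cols - 1)
            values.append(image[rr][cc])
    return values


def ssd(a: Sequence[float], b: Sequence[float]) -> float:
    """Sum of squared differences: lower means more similar patches."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def propagate_labels(target: Image, source: Image, source_labels: Labels,
                     radius: int = 1, search: int = 2) -> Labels:
    """Give each target pixel the label of the most similar labeled patch
    within a (2*search+1)-wide window of the source slice (same shape assumed).
    Returns None where the window contains no labeled source pixel."""
    rows, cols = len(target), len(target[0])
    result: Labels = [[None] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            query = patch(target, r, c, radius)
            best_cost: Optional[float] = None
            for sr in range(max(r - search, 0), min(r + search + 1, rows)):
                for sc in range(max(c - search, 0), min(c + search + 1, cols)):
                    label = source_labels[sr][sc]
                    if label is None:
                        continue  # skip pixels outside the expert's patch
                    cost = ssd(query, patch(source, sr, sc, radius))
                    if best_cost is None or cost < best_cost:
                        best_cost, result[r][c] = cost, label
    return result


def nearest_segmented(index: int, segmented: Dict[int, Labels]) -> int:
    """Index of the segmented slice closest to `index` in the stack."""
    return min(segmented, key=lambda k: abs(k - index))
```

Examining patches that run obliquely across several cross sections, as Golland suggests, would roughly mean replacing the 2-D `patch` function with one that samples a tilted 3-D neighborhood from the whole stack.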

The clinical study in the fall will involve MRIs from 10 patients who have already received treatment at Boston Children’s Hospital. Each of the seven surgeons will be given data on all 10 patients, some of them probably more than once. The data will include the raw MRI scans and, assigned at random, either a physical model or a computerized 3-D model; each model will in turn be based, again at random, on either human or algorithmic segmentation.
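The assignment described above amounts to two independent coin flips per case: model format and segmentation source. The minimal sketch below assumes simple uniform randomization with a seeded generator; the constants, field names, and `assign_cases` function are hypothetical, the article does not specify the actual scheme, and repeat viewings of a patient are not modeled.

```python
import random
from itertools import product

SURGEONS = 7
PATIENTS = 10
FORMATS = ("physical", "computerized")
SOURCES = ("human", "algorithmic")


def assign_cases(seed: int):
    """Give every surgeon every patient, each with an independently drawn
    model format and segmentation source (an assumed 2x2 randomization)."""
    rng = random.Random(seed)  # seeded so the plan can be reproduced
    plan = []
    for surgeon, patient in product(range(1, SURGEONS + 1), range(1, PATIENTS + 1)):
        plan.append({
            "surgeon": surgeon,
            "patient": patient,
            "format": rng.choice(FORMATS),
            "segmentation": rng.choice(SOURCES),
        })
    return plan


plan = assign_cases(seed=0)
```

Drawing the two factors independently means every combination (a physical model from human segmentation, a computerized model from algorithmic segmentation, and so on) can appear, which is what lets the study separate the effect of the model format from the effect of the segmentation source.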

Using that data, the surgeons will draw up surgical plans, which will be compared with documentation of the interventions that were performed on each of the patients. The hope is that the study will shed light on whether 3-D-printed physical models can actually improve surgical outcomes.