This was the dream: We would use technology to create a seamless health care system, one where people, computers and machines would work together to improve patient care in many different ways. Health care would be more efficient, safer and less expensive, and we would be able to transfer health-related information quickly and accurately.

After spending three days at a meeting recently with some of the top experts in the field, I am not so certain that the dream is going to come true anytime soon. Perhaps more concerning are the problems, including patient safety issues, that are cropping up in so many areas.

The meeting was organized by three federal agencies involved in the oversight of medical devices, applications, and health information technology: the Food and Drug Administration, the Office of the National Coordinator for Health Information Technology, and the Federal Communications Commission. Those three agencies recently released a report describing their vision for regulation of health information technology. The purpose of the meeting was to extend the discussion.

I came away with a sense that this social-technical ecosystem (their term, which I thought was actually very interesting) that we call health information technology is far more complex, and its problems more pervasive, than a lot of us understand, even those who work in healthcare and use these systems every day. The inevitable question is: What would people think if they really knew how serious the issues are? More importantly, who is going to fix them?

As a consumer of health care, you probably assume that when you go to your doctor’s office or receive care in a hospital, there is a reasonable certainty that the computer systems your hospital and doctor rely on are up to date and work as intended. Well, hopefully, most of the time they do. But how do we even know when they don’t? How do we know they are tested to be safe? How do we know if the latest upgrades have been installed? The answer, apparently, is that we don’t, because they aren’t.

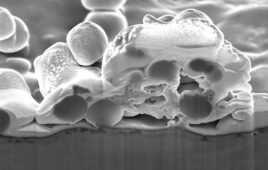

For example, the computer programs that these systems rely on are built layer upon layer over years. They are customized in many instances. But the people who provided the original architecture for a particular system — sometimes a long time ago — aren’t around and there is no simple record of what they did. And then we put all sorts of new programs in place on top of the old programs — but they can use different computer languages and don’t always play nice with each other. One hospital computer specialist told us their hospital installed over 600 new applications into their system in just one year. Others told of the complexities of making sure the systems work well together — and that they often don’t. One unsettling theme mentioned frequently was the fact that some of these situations can become dangerous, especially if the appropriate compatibility and error testing is not done.

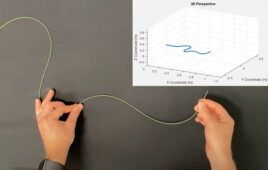

Then we get to the machines that are computer-driven and are supposed to integrate with these systems. Most of the time they work; sometimes they don’t. One physician made the point that even setting the time correctly between all the systems and machines is problematic. (And that was while we were sitting in an auditorium that had two “atomic clocks” blinking at us with the absolute, undeniable, officially government-sanctioned correct time. By the way, we all checked and set our watches accordingly.)