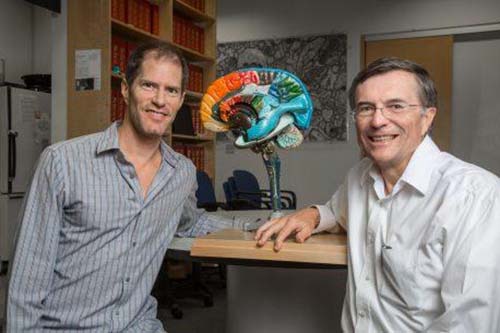

Salk researchers David Peterson and Terrence Sejnowski (Credit: Salk Institute)

If two clinicians observe the same patient with blepharospasm — uncontrollable muscle contractions around the eye — they’ll often come away with two different conclusions on the severity of the patient’s symptoms. That’s because the rating scales for blepharospasm are notoriously subjective and unreliable.

Now, in an attempt to provide a more objective scale for research and diagnosis, scientists at the Salk Institute have developed a computer program that takes over the job, analyzing videos of patients’ faces. The program could eventually be expanded to help study facial tics and twitches in other contexts, including Tourette Syndrome, schizophrenia, and Parkinson’s disease. The research was described online on October 21, 2016 in Neurology, the medical journal of the American Academy of Neurology.

“The field of neurology has a long tradition of making clinical decisions based on careful observations. We hope to supplement that expertise by leveraging advances in computer vision and machine learning,” says Terrence Sejnowski, head of Salk’s Computational Neurobiology Laboratory and senior author of the new work.

Blepharospasm involves abnormal and involuntary twitching of the eyelid and surrounding muscles. Often, its cause is unknown, but the spasms can be triggered by fatigue or stress and are also associated with certain drugs, hormone changes and many other disorders, including multiple sclerosis. To help with research into the underlying causes, as well as trials of potential treatments, scientists have previously developed three different rating scales that can be used to put a number on blepharospasm severity. Studies have shown, however, that the ratings have a high level of variability.

“They’re inherently subjective because they’re based on human judgment,” says David Peterson, a project scientist at Salk and the University of California, San Diego and first author of the new paper. “And when these measures are used either to optimize treatment in clinical care or during studies of the condition, that variability introduces error.”

Sejnowski, Peterson and collaborators customized existing facial analysis software called Computer Expression Recognition Toolbox (CERT) to make the ratings objective. In the past, CERT has been used to analyze facial expressions as they relate to emotions. The team modified the program to quantify how often a patient’s eyes closed when they were instructed to keep them open. They tested the program using 49 existing videos of patients with blepharospasm who had already enrolled in a nationwide research program and been recorded while following a set of commands to open and close their eyes. The records included ratings of the blepharospasm that had been given by doctors who saw the patients. Additionally, Peterson’s coauthors in the study included a set of experienced clinicians who rated the severity of each patient’s eye spasms by watching the same video being analyzed by CERT.

The new program was able to find a patient’s face in 100 percent of the video frames for 46 of the 49 patients; in the other 3 cases, it identified the face in more than 93 percent of video frames. CERT’s measure of severity, given as a percentage of eye closure time, correlated with the ratings given both by the clinicians who saw the patients in person and by those who watched the videos. The correlations varied slightly, but that was expected because of the natural variation in the standard rating scales, the researchers say. “Next, we have some further software development that has to happen to make the program more automated, and it needs to be validated with larger cohorts,” says Peterson.
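To make the two figures above concrete, here is a minimal sketch of how a percentage of eye closure time and a face detection rate could be computed from per-frame results. It is an illustration only, not the researchers' code or CERT's actual interface: the function names and the input format (one value per video frame, `True` for eyes closed, `False` for eyes open, `None` where no face was found) are assumptions made for this example.

```python
from typing import Optional, Sequence


def face_detection_percent(frames: Sequence[Optional[bool]]) -> float:
    """Percent of frames in which a face was found (value is not None)."""
    if not frames:
        raise ValueError("no frames supplied")
    found = sum(1 for f in frames if f is not None)
    return 100.0 * found / len(frames)


def eye_closure_percent(frames: Sequence[Optional[bool]]) -> float:
    """Percent of face-detected frames in which the eyes were closed.

    Frames without a detected face (None) are skipped. That is an
    assumption of this sketch, not a documented CERT behavior.
    """
    scored = [f for f in frames if f is not None]
    if not scored:
        raise ValueError("no frames with a detected face")
    closed = sum(1 for f in scored if f)
    return 100.0 * closed / len(scored)


# Hypothetical five-frame clip: eyes closed in two frames, face missed in one.
clip = [True, False, None, True, False]
print(face_detection_percent(clip))  # 80.0
print(eye_closure_percent(clip))     # 50.0
```

In this toy clip, a face is found in four of five frames, and the eyes are closed in two of those four. A higher eye closure percentage during an "eyes open" instruction would indicate more severe spasms under this kind of measure.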

Peterson points out that, once validated for use with blepharospasm, CERT could be adapted for other disorders that involve abnormal movements and muscle contractions in the face.