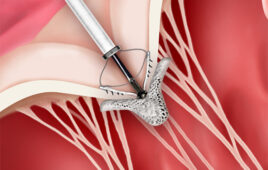

Superconductors are most commonly used in magnetic resonance imaging. (Credit: Volt Collection/Shutterstock)

In the 1970s, physicists proposed that superconductivity could be induced at the interface, the point where two different non-superconducting materials are joined.

Several decades later, scientists have successfully demonstrated the concept for the first time. The breakthrough promises to improve the commercial viability of superconductors.

“Superconductivity is used in many things, of which MRI, magnetic resonance imaging, is perhaps the best known,” Paul C.W. Chu, chief scientist at the Texas Center for Superconductivity at the University of Houston, said in a news release.

Superconductors, unlike semiconductors, carry electricity without resistance. But superconductors must be super-cooled, which requires a lot of energy and makes the technology quite expensive.

The latest research shows it is possible to raise the “critical temperature” at which a non-superconducting material becomes superconducting. Researchers were able to induce superconductivity in the non-superconducting compound calcium iron arsenide.

As researchers explained in a new paper on the breakthrough — published in the journal PNAS — the key to inducing superconductivity is to “take advantage of artificially or naturally assembled interfaces.”

Superconductivity at a higher critical temperature can be “induced by antiferromagnetic/metallic layer stacking,” researchers wrote.

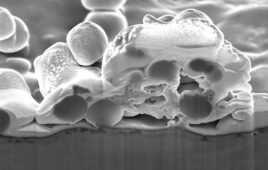

In experiments aimed at validating the decades-old theory, researchers heated undoped calcium iron arsenide compounds to 350 degrees Centigrade. The process, called annealing, causes the material to form two phases, one “converted” and one “annealed,” each with its own distinct internal structure.

Researchers confirmed superconductivity at the interface where the two phases coexist.

The temperature at which the interface becomes superconducting is still pretty low, researchers admit, but the result is a promising step in the right direction.