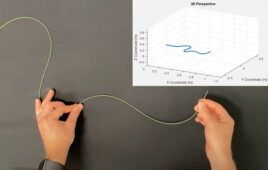

PupilScreen aims to allow anyone with a smartphone to objectively screen for concussion and other brain injuries on the spot — whether on the sidelines of a sports game or at an accident site. [Image from Dennis Wise/University of Washington]

Researchers at the University of Washington are currently working to develop a smartphone app that can detect brain injuries when they happen. The app’s goal is to detect the injuries on the sidelines of sports games, on the battlefield or in the home of an elderly person who is prone to falling.

The app, known as PupilScreen, is designed to detect changes in how the pupil of the eye reacts to light, using a smartphone’s video camera in combination with deep learning tools. The deep learning tools, which are a type of artificial intelligence, help quantify changes that the human eye cannot see.

The pupil’s light reflex has long been used as an indicator of severe traumatic brain injury, and the researchers say it can also be used to detect milder concussions.

Computer scientists, electrical engineers and medical researchers at the University of Washington have shown that PupilScreen can detect significant traumatic brain injury. Now, a broader clinical study will allow coaches, emergency medical technicians, doctors and others to use PupilScreen at their discretion, gathering more data on which pupillary response characteristics are most helpful in identifying concussions.

“Having an objective measure that a coach or parent or anyone on the sidelines of a game could use to screen for concussion would truly be a game-changer,” Shwetak Patel, the Washington Research Foundation Endowed Professor of Computer Science & Engineering and of Electrical Engineering at the University of Washington, said in a press release. “Right now the best screening protocols we have are still subjective, and a player who really wants to get back on the field can find ways to game the system.”

According to the researchers, PupilScreen assesses a patient’s pupillary light reflex, a job otherwise done by a pupilometer, an expensive and rarely used device that has been limited to hospitals. A smartphone’s flash stimulates the eyes and the video camera records a three-second video. The video is processed through deep learning algorithms that detect which pixels are related to the pupil in each video frame and measure the changes in pupil size across the frames.
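The measurement step described above can be illustrated with a short Python sketch. It is not the researchers' code: it assumes a segmentation model has already labeled the pupil pixels in each frame, and the function names, the equivalent-circle diameter and the summary metrics are illustrative choices rather than details from the study.

```python
import numpy as np


def pupil_diameters(masks):
    """Equivalent-circle pupil diameter (in pixels) for each frame.

    masks: boolean array of shape (frames, height, width), True where a pixel
    was labeled as pupil. Producing these masks is the segmentation model's
    job and is not shown here (assumption: the masks are already available).
    """
    areas = masks.reshape(masks.shape[0], -1).sum(axis=1)
    # A circle with this pixel area has diameter 2 * sqrt(area / pi).
    return 2.0 * np.sqrt(areas / np.pi)


def constriction_summary(diameters, fps):
    """Summarize how much and how fast the pupil shrinks after the flash."""
    baseline = diameters[0]
    min_idx = int(np.argmin(diameters))
    smallest = diameters[min_idx]
    return {
        "baseline_px": float(baseline),
        "min_px": float(smallest),
        "constriction_pct": float(100.0 * (baseline - smallest) / baseline),
        "time_to_min_s": min_idx / fps,
    }


def disk_mask(size, radius):
    """Synthetic stand-in for one segmented frame: a filled disk of pupil pixels."""
    yy, xx = np.mgrid[:size, :size]
    center = size // 2
    return (yy - center) ** 2 + (xx - center) ** 2 <= radius ** 2


# Hypothetical four-frame clip: the pupil constricts, then begins to recover.
masks = np.stack([disk_mask(101, r) for r in (30, 24, 20, 22)])
diameters = pupil_diameters(masks)
summary = constriction_summary(diameters, fps=30)
print(summary)
```

In a real recording the masks would come from the trained network, and the frame rate would be that of the phone's camera; the 30 frames per second used here is only a placeholder.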

In a small study, 48 results from patients with traumatic brain injury and healthy people were analyzed. Clinicians were able to differentiate between brain injuries and healthy brains with almost perfect accuracy using just the app.

Concussions are caused by a bump, blow or jolt to the head or body that can cause the brain to move quickly within the skull. About 1.6 million to 3.8 million sports and recreation-related concussions happen in the U.S. alone each year, according to the Brain Injury Research Institute. Football accounts for more than 60% of concussions. Signs of concussion can include headache, nausea, fatigue, confusion or memory problems, sleep disturbances and mood changes.

Currently, athletes suspected of having a concussion are asked where they are, to repeat a list of words, to balance and to touch a finger to their noses. Those are subjective tests. PupilScreen is designed to create objective and clinically relevant data that anyone can use to determine whether someone needs further evaluation for a concussion or other brain injury.

“PupilScreen aims to fill that gap by giving us the first capability to measure an objective biomarker of concussions in the field,” Lynn McGrath, the study’s co-author, said. “After further testing, we think this device will empower everyone from Little League coaches to NFL doctors to emergency department physicians to rapidly detect and triage head injury.”

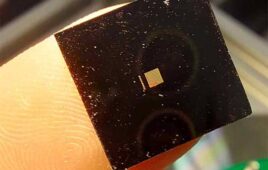

The researchers are currently fine-tuning their neural network so that the smartphone alone can produce results similar to those of their original setup, which used a 3D-printed box to control the eye’s exposure to light.

“The vision we’re shooting for is having someone simply hold the phone up and use the flash. We want every parent, coach, caregiver or EMT who is concerned about a brain injury to be able to use it on the spot without needing extra hardware,” said lead author Alex Mariakakis.

The algorithms the team designed can differentiate between the pupil and the iris, which, without machine learning, involves annotating about 4,000 images of the eyes by hand. The computer quantifies subtle changes in the pupillary light reflex that the human eye cannot see.

“Instead of designing an algorithm to solve the specific problem of measuring pupil response, we moved this to a machine learning approach — collecting a lot of data and writing an algorithm that allowed the computer to learn for itself,” said co-author Jacob Baudin.

Researchers on the project are working to find partners that want to conduct more field studies of the app.

The study was funded by the National Science Foundation, the Washington Research Foundation and Amazon Catalyst.
