Will the next generation of surgical robots be able to compensate for patient movements, or avoid collisions with unexpected hands or other objects that block their anticipated path of motion? Although this demonstration of collaborative robotics actually took place at ATX East, the automation conference sharing the cavernous Jacob K. Javits Convention Center with MD&M this week, it offers a tangible hint of where robot-based medicine is heading in the not-so-distant future.

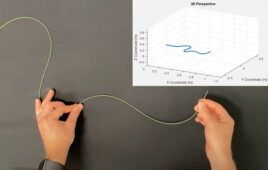

The demonstration we saw is the result of a partnership between robot maker Universal Robots and Energid, a company that develops software and machine vision systems for robots. As you can see in the video, the robot is programmed to perform a real-time adaptive pick-and-place task, which involves taking ‘widgets’ out of a feeder and placing them into a target location. The feeder can be moved around the table, and the robot uses machine vision to track the feeder's position so it can pick the next widget. The robot also uses its machine vision to dodge a wand while performing its task.

The same capabilities that enable robots to avoid collisions with people in industrial settings should eventually help introduce collaborative robots into surgical theaters. Getting back to today’s applications, Universal Robots says that a system like this relaxes the requirement for precise positioning of fixtures, making setup and programming of the UR cobots even easier. Energid software is now also part of the rapidly expanding Universal Robots+ platform, which features plug-and-play products for the UR robot arms.