Eye surgery is a delicate and precise process. A new simulation platform based on augmented reality allows surgeons to practise surgical procedures on a virtual model in three dimensions.

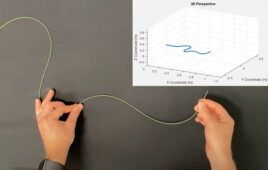

The first thing you notice when you visit Marino Menozzi’s office in Zurich is an eye the size of a medicine ball. The polystyrene model sits on a shelf behind Menozzi’s desk, but its purpose only becomes clear when you enter the lab next door. Positioned in front of a computer screen is a wooden board with two small cameras mounted at right angles to each other. Next to this is a handle with a tiny pair of forceps and bulky glasses that look like something out of Robocop. “What we have here is a simulation platform for eye surgery,” says Menozzi, a Privatdozent at the Chair of Consumer Behaviour.

Aspiring ophthalmologists have been visiting the laboratory for months, putting their skills to the test in surgery for epiretinal membrane, also known as macular pucker. This kind of operation becomes necessary when the connective tissue membrane around the vitreous of the eye becomes cloudy and creates tension on the retina. This can lead to tears which can cause severe loss of vision.

A tricky operation

The first step in the surgical procedure is to remove the transparent gel in the posterior chamber of the eye known as the vitreous body. Next, the surgeon inserts very fine forceps through a cannula with a diameter of less than one millimetre and peels away the wafer-thin membrane from the retina. If all goes well, the patient’s vision returns to normal a few weeks later. This kind of operation requires great delicacy and experience, which is why budding surgeons often spend years playing an assisting role in the procedure while gradually improving their skills by practising on plastic models and live animals. This latter approach is expensive, raises ethical questions, and does not enable surgeons to practise difficult movements repeatedly.

This is where the Robocop glasses in Menozzi’s laboratory come into play, offering trainee surgeons the opportunity to practise in a virtual environment supported by augmented reality. Put on the glasses and a virtual eye appears in front of you, hovering in the air and magnified eight times – exactly the same level of magnification that surgeons normally use when performing surgery under the microscope. Unlike virtual reality (VR), where the user can no longer see their real-life environment at all, augmented reality (AR) merely enriches reality by adding virtual elements to what is already there.

The additional virtual elements in this case are the magnified eye and instructions for the operation that appear in the field of vision, including features such as arrows, lines and circles that show the trainee surgeon which path they should ideally follow with the forceps. At the same time, they can still see the real world around the magnified eye, allowing them to see their hands and the position of the surgical instrument.

The tiny cameras on the board record every hand movement and transmit it to the glasses. The computer can use this information to precisely calculate the degree of accuracy with which the participant has performed the operation. Budding surgeons can also work through a virtual “intelligent tutoring” process based on an algorithm that calculates which stages of the operation they performed successfully and which they struggled with. This feedback is used to create a sequence of exercises that guarantees the most effective learning experience. “We hope this will improve the training effect, reducing the time it takes to get surgeons into the operating room and minimising the number of mistakes they make,” says Menozzi.
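
The article does not say how the accuracy score or the tutoring algorithm actually works. As a rough illustration only, the idea could be sketched as follows: measure how far the recorded forceps tip strays from the ideal path in each stage, then schedule the weakest stages first. The stage names, coordinates and function names below are hypothetical and are not taken from the simulator itself.

```python
import math

def mean_deviation(recorded, ideal):
    """Average distance from each recorded tip position to the nearest ideal-path point."""
    return sum(min(math.dist(p, q) for q in ideal) for p in recorded) / len(recorded)

def order_exercises(recorded_by_stage, ideal_by_stage):
    """Score every stage and return (stage names, weakest first; scores per stage)."""
    scores = {
        stage: mean_deviation(path, ideal_by_stage[stage])
        for stage, path in recorded_by_stage.items()
    }
    # Larger deviation means the trainee struggled more, so that stage comes first.
    ranking = sorted(scores, key=scores.get, reverse=True)
    return ranking, scores

# Hypothetical data: 3D tip positions in millimetres for two made-up stages.
ideal_by_stage = {
    "grasp": [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
    "peel": [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
}
recorded_by_stage = {
    "grasp": [(0.0, 0.1, 0.0), (1.0, 0.1, 0.0), (2.0, 0.1, 0.0)],
    "peel": [(0.0, 0.5, 0.0), (1.0, 0.5, 0.0), (2.0, 0.5, 0.0)],
}

ranking, scores = order_exercises(recorded_by_stage, ideal_by_stage)
print(ranking)
print(scores)
```

In this made-up example the "peel" stage deviates more from the ideal path than the "grasp" stage, so it would be practised first. A real tutoring system would presumably weigh more than path deviation, such as timing or contact with the retina.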

Lack of haptics and a delay

Three Master’s theses are currently exploring the benefits and acceptance of the simulation with the help of 23 aspiring surgeons. Gian-Luca Köchli is one of the participants. He is in the final year of his Master’s degree programme and has already tried the AR simulator six times. “The simulation is remarkably sensitive and precise,” he says. “I was amazed at how realistic it already is.” One thing that bothered him, however, was the slight delay between his actual movements and the images shown in the glasses. He would also like to see realistic haptics incorporated in the simulator. In general, though, practising surgery with the simulator before performing it on patients is something he sees as a promising approach.

Menozzi currently sees three key challenges. The first is the 20 to 30 millisecond delay spotted by Köchli. This occurs because the virtual images that show the hand movements must first be calculated by a computer and then sent to the glasses via a wireless LAN, and Menozzi is aware that this latency is too high. The second challenge is that some users respond to certain simulations by becoming dizzy or even vomiting. The third challenge is achieving the feeling of actually being present during the simulation. “We see this as an important indication of how well the results of the simulation can be transferred to reality,” Menozzi says. At present, for example, users still have a severely restricted field of vision. Healthy human eyes have a natural field of view of around 200 degrees, while the mixed reality glasses only offer a visual field of 35 degrees. Achieving a realistic sensation would require a 120-degree field of view. The sense of reality could also be enhanced by sounds, smells and haptics.

“App store” for simulations

Sandro Ropelato, who developed the simulator as part of his doctoral thesis, believes that the technology’s potential extends far beyond eye surgery. Other complex and risky surgical procedures could also be modelled for AR training simulations in the future, enabling surgeons to practise all their hand movements on a virtual model before operating on a patient. He also envisages applications in the realm of electronics, such as preparing silicon wafers for microchips.

Ropelato’s longer-term vision includes a kind of app store that would offer a variety of simulations for his hardware set-up of the HoloLens, micro-cameras and computer. The 3D simulation is based on Unity, a leading game development platform that has also become the number one choice for a whole host of AR and VR applications. That means thousands of software components are available over the Internet, which simply need to be adapted to each particular application. In the medium term, Ropelato and Menozzi are planning to create a spin-off to market the simulation platform. “But we need to learn a lot more from our users before we do that,” Menozzi says. “Their acceptance of this product is the key to our success.”