Confusion matrix, with true (and false) positives and negatives (as well as usual artifacts, e.g., hair). (Credit: Florida Atlantic University)

Too much, too little, just right. It might seem like a line from “Goldilocks and the Three Bears,” but it actually describes an important finding from researchers in Florida Atlantic University’s College of Engineering and Computer Science. They have developed a technique using machine learning – a sub-field of artificial intelligence (AI) – that will enhance computer-aided diagnosis (CADx) of melanoma. Thanks to the algorithm they created – which can be used in mobile apps that are being developed to diagnose suspicious moles – they were able to determine the “sweet spot” in classifying images of skin lesions.

This new finding, published in the Journal of Digital Imaging, will ultimately help clinicians more reliably identify and diagnose melanoma skin lesions, distinguishing them from other types of skin lesions. The research was conducted in the NSF Industry/University Cooperative Research Center for Advanced Knowledge Enablement (CAKE) at FAU and was funded by the Center’s industry members.

Melanoma is a particularly deadly form of skin cancer when left undiagnosed. In the United States alone, there were an estimated 76,380 new cases of melanoma and an estimated 6,750 deaths due to melanoma in 2016. Malignant melanoma and benign skin lesions often appear very similar to the untrained eye. Over the years, dermatologists have developed different heuristic classification methods to diagnose melanoma, but with limited success (65 to 80 percent accuracy). As a result, computer scientists and doctors have teamed up to develop CADx tools that help physicians classify skin lesions, which could potentially save numerous lives each year.

“Contemporary CADx systems are powered by deep learning architectures, which basically consist of a method used to train computers to perform an intelligent task. We feed them massive amounts of data so that they can learn to extract meaning from the data and, eventually, demonstrate performance comparable to human experts – dermatologists in this case,” said Oge Marques, Ph.D., lead author of the study and a professor in FAU’s Department of Computer and Electrical Engineering and Computer Science who specializes in machine cognition, medical imaging and health care technologies. “We are not trying to replace physicians or other medical professionals with artificial intelligence. We are trying to help them solve a problem faster and with greater accuracy, in other words enabling augmented intelligence.”

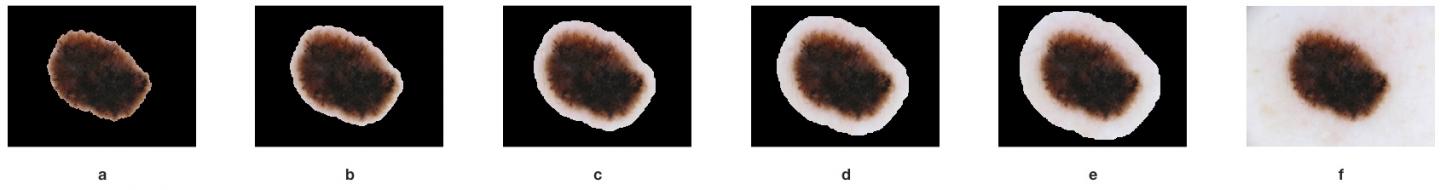

Images of skin lesions often contain more than just skin lesions – background noise, hair, scars, and other artifacts in the image can potentially confuse the CADx system. To prevent the classifier from incorrectly associating these irrelevant artifacts with melanoma, the images are segmented into two parts, separating the lesion from the surrounding skin, in the hope that the segmented lesion can be more easily analyzed and classified.

“Previous studies have produced conflicting results: some research suggests that segmentation improves classification while other research suggests that segmentation is detrimental, due to a loss of contextual information around the lesion area,” said Marques. “How much we segment an image can either help or impede skin lesion classification.”

With that in mind, Marques and his collaborators set out to answer a question: “How much segmentation is too much?” The team included Borko Furht, Ph.D., a professor in FAU’s Department of Computer and Electrical Engineering and Computer Science and director of the NSF-sponsored CAKE at FAU; Jack Burdick, a second-year master’s student at FAU; and Janet Weinthal, an undergraduate student at FAU.

To do so, the researchers first compared the effects of no segmentation, full segmentation, and partial segmentation on classification and demonstrated that partial segmentation led to the best results. They then proceeded to determine how much segmentation would be “just right.” To do that, they used three degrees of partial segmentation, investigating how a variable-sized non-lesion border around the segmented skin lesion affects classification results. They performed comparisons in a systematic and reproducible manner to demonstrate empirically that a certain amount of segmentation border around the lesion could improve classification performance.

Their findings suggest that extending the border beyond the lesion to include a limited amount of background pixels improves their classifier’s ability to distinguish melanoma from a benign skin lesion.
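
To make the idea of a variable-sized border concrete, here is a minimal Python sketch. It is an illustration only, not the researchers' code: the function names (`expand_mask`, `apply_mask`), the toy 5x5 image, the square-shaped border, and the border width of one pixel are all assumptions chosen for clarity. A real pipeline would likely work on full-size images with a dedicated image-processing library.

```python
def expand_mask(mask, border):
    """Grow a binary lesion mask by `border` pixels in every direction.

    Hypothetical helper: uses a simple square neighbourhood, so border=0
    returns a copy of the original mask (full segmentation).
    """
    rows, cols = len(mask), len(mask[0])
    grown = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if mask[r][c]:
                # Mark every pixel within `border` of a lesion pixel,
                # clipping at the image edges.
                for rr in range(max(0, r - border), min(rows, r + border + 1)):
                    for cc in range(max(0, c - border), min(cols, c + border + 1)):
                        grown[rr][cc] = 1
    return grown


def apply_mask(image, mask, fill=0):
    """Keep pixels where the mask is set; replace the rest with `fill`."""
    return [
        [pixel if keep else fill for pixel, keep in zip(image_row, mask_row)]
        for image_row, mask_row in zip(image, mask)
    ]


# Toy 5x5 grayscale "image" and a one-pixel lesion mask at the centre.
image = [[10 * r + c for c in range(5)] for r in range(5)]
lesion_mask = [[1 if (r, c) == (2, 2) else 0 for c in range(5)] for r in range(5)]

# Full segmentation: only the lesion pixel survives.
full_segmentation = apply_mask(image, lesion_mask)

# Partial segmentation: lesion plus a one-pixel ring of surrounding skin.
partial_segmentation = apply_mask(image, expand_mask(lesion_mask, 1))
```

Varying the `border` argument is the knob being illustrated here: zero discards all surrounding skin, while a very large value approaches no segmentation at all.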

“Our experimental results suggest that there appears to be a ‘sweet spot’ in the degree to which the surrounding skin included is neither too great nor too small and provides a ‘just right’ amount of context,” said Marques.

Their method showed an improvement across all relevant measures of performance for a skin lesion classifier.
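
The article does not list which performance measures were used. As a rough illustration of how such measures are commonly derived from a confusion matrix of true and false positives and negatives, the Python sketch below computes four standard ones. The function name `classifier_metrics` and the counts passed to it are made up for the example and are not results from the study.

```python
def classifier_metrics(tp, fp, tn, fn):
    """Compute common binary-classification measures from confusion-matrix counts.

    tp/fn: melanomas correctly / incorrectly classified;
    tn/fp: benign lesions correctly / incorrectly classified.
    """
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,      # share of all lesions classified correctly
        "sensitivity": tp / (tp + fn),      # share of melanomas that were caught
        "specificity": tn / (tn + fp),      # share of benign lesions correctly cleared
        "precision": tp / (tp + fp),        # share of melanoma calls that were right
    }


# Hypothetical counts, chosen only to exercise the function.
metrics = classifier_metrics(tp=40, fp=10, tn=45, fn=5)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```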