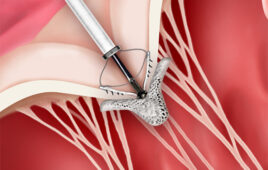

Researchers have developed a prototype for an MR imaging-compatible robot designed to enable neurosurgeons to remove brain tumors.

Using maggots to eat away dead human tissue may not seem to have much in common with neurosurgery, but the age-old idea was the inspiration for a trio of researchers to combine robotics and real-time MR brain imaging to treat brain tumors.


Several years ago, J. Marc Simard, M.D., a professor and neurosurgeon at the University of Maryland School of Medicine, Baltimore, happened to be watching a television program on the medical use of sterile maggots. Finding it “fascinating,” Dr. Simard thought the idea could work with brain tumors—minus the maggots.

“The idea was to develop a very tiny robot that could remove diseased areas while leaving healthy tissue untouched, which of course is one of the major challenges with removing brain tumors,” Dr. Simard said.

Dr. Simard joined with other experts—Rao Gullapalli, Ph.D., associate professor of diagnostic radiology at the University of Maryland, and Jaydev Desai, Ph.D., an associate professor of mechanical engineering at the University of Maryland, College Park—to develop the prototype for a minimally invasive neurosurgical intracranial robot (MINIR) they hoped could one day be a huge aid to neurosurgeons in removing difficult-to-reach brain tumors.

Initially funded in part by a 2006 National Institutes of Health (NIH) grant, the team developed MINIR over a number of years and determined its feasibility. In 2012, the team secured a $2 million National Institute of Biomedical Imaging and Bioengineering (NIBIB) grant to continue developing MINIR-II, a fully MR imaging-compatible robot designed to enable neurosurgeons to reach and remove brain tumors, with the goal of greatly improving outcomes for these patients.

“The clinical issue is quite simple,” Dr. Gullapalli said. “Current neurosurgery practices—especially those involving resection of deep-seated tumors—are in some sense blinded.”

Dr. Gullapalli received a $25,000 Research & Education (R&E) Research Seed Grant in 1999 at the University of Maryland for the project, “Regional Cerebral Blood Flow at Rest & Task Dependent Activation in the Supplementary Motor Cortex: Correlating Baseline Perfusion MRI with Functional MRI.”

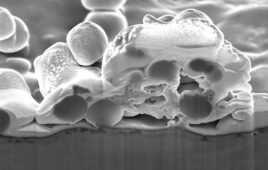

Based on imaging performed before the surgery, surgeons open the skull and make a large incision in the brain to create a line of sight that allows them to extract the tumor. “The problem is that you can never be quite sure you’ve removed enough of the tumor,” Dr. Gullapalli explained. “And because new tissue moves in to replace the tissue that’s been removed, there is a risk of removing some of the healthy tissue.”

The procedure may have to be repeated if the tumor isn’t completely removed, making it potentially very cumbersome and drawn out, and not a “high-confidence surgery,” Dr. Gullapalli said. But by dropping the tiny robot down into the tumor area and guiding it with MR imaging, surgeons would be able to remove the tumor without injuring nearby healthy tissue.

Robotics and MR Imaging Form Critical Union

Dr. Gullapalli, whose area of expertise is MR, and Dr. Desai embarked on the project based on their experience using robots to perform breast biopsies. “Moving from breast to neuro is a huge leap,” he said.

Moreover, marrying robotics and MR imaging to create this new device brought with it a huge challenge—developing a robot that could safely be used inside an MR imaging scanner. “It’s not just the type of material, but its shape that matters,” Dr. Gullapalli said. “The shape of any object will deflect the magnetic field somewhat and create image distortion, so we had to work out these details.”

Above all, the device had to be small, Dr. Desai said. “We needed something we could maneuver like a finger, but with more freedom,” he said. The actuator, the device that drives the robot, must also be compatible with MR imaging and create enough force to move the robot joint and perform tasks like electro-cauterizing tissue. The latest prototype has a plastic robot body and shape-memory alloy spring actuators that sit apart from the robot’s body.

Another challenge, Dr. Gullapalli said, is “that you actually have to drive this thing.” He compared it to driving a car: the operator wants to know what’s in front, behind and to the sides. And that’s where MR imaging comes into play.

“As this device moves and chisels out part of a tumor, the surgeon needs to make sure he’s not inadvertently going to hit normal tissue,” he said. “Real-time image guidance is critical, so we have to have sensors built into the robot to tell us its location.”

In that way, Dr. Gullapalli said, the scanner can provide the robot—or the surgeon operating the device—images letting it know what lies in front, behind and on its sides, “in real time and on demand.”

Researchers working to further develop the device by reducing image distortion and testing its safety and efficacy say bringing the device to clinical trials is probably five to 10 years away. “This is a long-term project,” Dr. Gullapalli said.